Understanding science funding in tech, 2011-2021

March 2, 2022

For those who sit between science and tech, it’s hard not to notice the proliferation of new initiatives launched in the last two years, aimed at making major improvements in the life sciences especially.

While I don’t have a science background, nor any personal relationship to the space (other than knowing and liking many of the folks involved), I became interested in learning why the space changed so suddenly, particularly through a philanthropic lens. Figuring out what worked in science can help us tackle other, similarly shaped problems in the world.

To understand what happened, I looked at examples of science-related efforts in tech over the past ten years (roughly 2011-2021). I looked for patterns that would help me extrapolate the norms and values of the time, as well as inflection points that shifted those attitudes. I also interviewed a number of people in the space to help me fill in the gaps, as well as to understand what they value and what success looks like.

A caveat: It’s rare, if not impossible, to produce clean answers to messy questions like “why did this culture change,” so please treat this piece as a starting point for further research.

If you don’t want to read the whole post, you can jump to the summary.

Table of Contents

- Problems in science

- Defining a theory of change

- Science innovation via startups (2011-2014)

- Early philanthropic approach (2015-2017)

- Field building and new institutions (2018-2021)

- Why are there so many new initiatives today?

- Measuring success

- Epilogue: DeSci and new crypto primitives

- Summary

- Further reading

Problems in science

When people say they want to “do science better,” which issues are they trying to address, and how?

There are several observations that seem to be widely recognized by those who work in and around science. These topics have been covered extensively and in greater detail elsewhere, so I’ll touch on them only briefly:

As a scientist, getting funding is slow and bureaucratic

The popularity of Fast Grants, a rapid grants program started in response to the COVID-19 pandemic, illustrates the lack of options for scientists. Its creators note in a retrospective that they were surprised at how many applicants came from top twenty research institutions: “We didn’t expect people at top universities to struggle so much with funding during the pandemic,” yet 64% of respondents to a survey sent to grant recipients said that their work wouldn’t have happened at all without a Fast Grant.

The rewards system in academia, while robust, doesn’t select for the best work

Scientists are expected to publish their findings in journals, and their reputation can be measured by citations. But peer review selects for consensus rather than risk-taking, and scientists feel pressured to publish for quantity rather than quality, among a host of other concerns.

Early-career scientists are at a disadvantage

Science is trending older and towards scientists with demonstrated experience. Most NIH grant funding goes to older scientists, and the age at which scientists make Nobel Prize-winning discoveries is increasing.

Defining a theory of change

Why do any of these problems matter? If we had to come up with a “so what” for the observations above, we might say that, due to these systemic challenges, scientific progress is not as robust as it could be. Compared to other historical periods, such as the Victorian or Cold War era, it seems difficult for promising, talented scientists to pursue their work today, particularly when their ideas are experimental or unproven.

New Science founder Alexey Guzey notes in a 2019 investigation of the life sciences that scientists have learned to work around some of these problems by, for example, applying for grants with their “boring” ideas, then earmarking a portion of that to fund their “experimental” ideas. Regardless, it’s reasonable to assume that there is even more work that could be accomplished if scientists didn’t have to engage in such gymnastics. From the aforementioned Fast Grants survey, for example, 78% of respondents said that they would change their research program “a lot” if they had access to “unconstrained, permanent funding.”

If we had to write a tech-flavored theory of change for science, then, it might look something like this:

Ensure that scientific progress can flourish by removing financial and institutional obstacles for the world’s best scientists, so that they can fully pursue their curiosity and produce research that finds its way into applications that benefit humanity.

Within this statement, there are differences among practitioners regarding which activities they think are most important:

- Some people I spoke to believed that fixing the lack of science funding, or slow funding processes, is the biggest lever for impact: give scientists money and let them run with their ideas.

- Others felt that academic norms are the bigger obstacle: research should operate more like startup culture.

- Still others believed that there’s a divide between those who are focused on basic research, versus those who want to apply that research: bring research to market faster, so humanity can benefit from scientists’ work.

I’ll cover some of these approaches in more detail in the sections that follow.

Science can also be viewed as a subset of a broader problem statement: “How do we support research culture in tech?” Artificial intelligence, for example, falls under this umbrella, but with a different trajectory and history of funding. Ditto human-computer interaction (HCI) and “tools for thought.” Even “science,” itself, is an extremely broad category, as we’ll see in the following sections (note that the particular focus on improving science processes is sometimes called “metascience”).

In this case study, I’m only looking at how science research overlaps with tech in the last ten years. In many cases, however, tech’s sentiment towards research also affects how we think about science, and vice versa, which I’ll occasionally touch on here.

Now that I’ve gotten those caveats out of the way, let’s look at what today’s practitioners have in common. Revisiting the theory of change above, what’s unusual or significant about the tech-native approach to science?

One aspect that stands out, to me, is the focus on supporting and attracting top science talent. There’s an underlying assumption here that the quality of individual scientists matters, and perhaps even that science makes leaps and bounds thanks to the contributions of a talented few, rather than the community at large. (A meta-analysis by José Luis Ricón appears to support this hypothesis, although he notes these conclusions may vary from field to field.)

The focus on “top talent” feels very tech-native to me, similar to how founders think about startups. While no meritocracy is perfect, tech culture thrives in part because companies tend to place less emphasis on markers like pedigree or years of experience, and more emphasis on what someone has actually done. Prioritizing high-quality talent also helps organizations stave off decline as they grow. It is unsurprising, then, that tech would bring that same mindset to science.

Secondly, there’s a consistent emphasis on output, particularly bringing research to market. Again, this “results-driven” approach feels very tech-native to me: a belief that basic research should ultimately serve a long-term purpose that benefits humanity – and that we should try to shorten that timeline as much as possible.

Most people I spoke to believe that if you can commercialize your work, you should – with the caveat, of course, that not everything can be commercialized. Even nonprofit science initiatives tend to emphasize values that are startup-inspired, such as speed, demonstrated credentials, and collaboration.

Finally, there’s an implicit belief among practitioners today that change is exogenous: we must work outside of institutions, exerting influence from the outside in, to accomplish these goals. While some organizations do partner with universities, for example, they still operate outside of a traditional academic career path.

These values might seem obvious to those who work in tech, but if we return to the high-level vision of “Ensure that scientific progress can flourish,” applying these values eliminates a number of options that other, non-tech practitioners might pursue: for example, establishing postdoc programs, improving tooling at university research labs, increasing enrollment in STEM graduate programs, etc.

With these values in mind, let’s look at how science funding has evolved in tech over the last decade.

Science innovation via startups (2011-2014)

A common theme I heard from my conversations is that the science problem statement hasn’t changed significantly in the past ten years. There’s long been a shared awareness that science wasn’t working as well as it could be, and a desire to do something about it. What has changed, however, are views around how the problem is addressed.

A decade ago, most people felt that startups were the best way to make progress in science: either start a company, or fund companies.

A philosophical foundation for science progress at the time could be found in economist and writer Tyler Cowen’s book, The Great Stagnation, published in 2011. Cowen put forth a broader thesis about America’s economic stagnation, but he pointed to a lack of scientific breakthroughs, and a generally slowing rate of technological progress, as contributing causes. [1]

Peter Thiel, to whom Cowen dedicates the book, was outspoken about declining scientific innovation. In The Great Stagnation, Cowen quotes an interview with Thiel, who states that: “Pharmaceuticals, robotics, artificial intelligence, nanotechnology—all these areas where the progress has been a lot more limited than people think. And the question is why.”

It was around this time, in 2011, that Thiel also adopted the now-infamous tagline for his venture capital firm, Founders Fund, founded in 2005: “We wanted flying cars, instead we got 140 characters.” Thiel’s decision to turn this statement into an investment thesis reveals his theory of change: that science progress would be addressed through the markets, rather than by funding basic research.

While it’s hard to pinpoint why startups were the favored approach to science at the time, the simplest answer is that it correlates with the popularity of startups more generally in the 2010s. Y Combinator, the accelerator that played a major role in making startups both more attractive and easier to start, was founded in 2005, but hit its cultural stride in the 2010s. Many of its most successful alumni companies either started or achieved breakout growth in the 2010s. Marc Andreessen’s 2011 op-ed, “Software is eating the world”, captured the sentiment of the time: that software-enabled startups could be applied towards solving many different problems across industries.

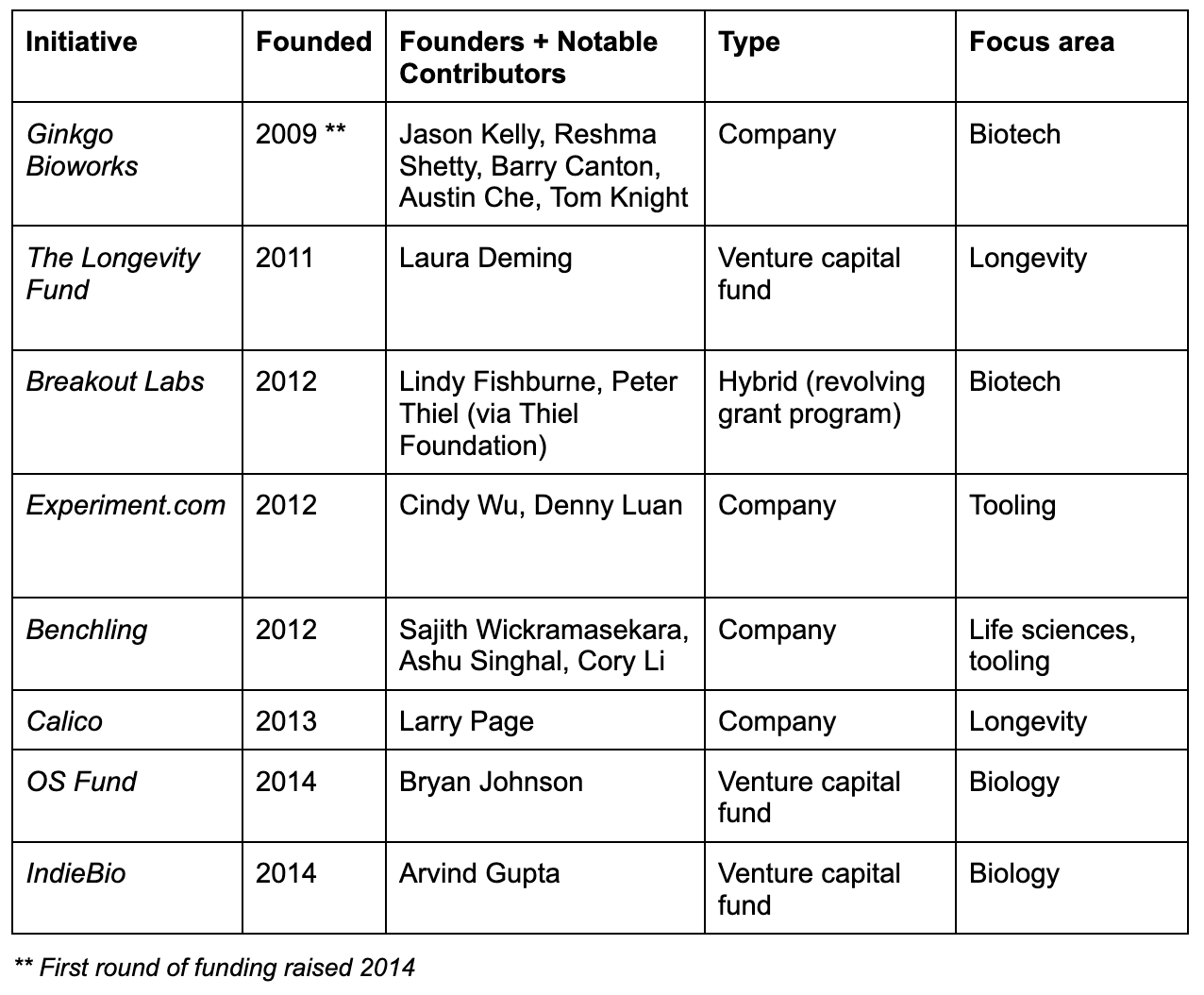

With the exception of Breakout Labs (which, although a grants program, was structured as a revolving fund, with revenue deriving from grantees’ IP and/or royalties), notable science efforts of the time were usually startups or venture capital funds. Examples include:

Outside of startups, tech had two notable research patrons at the time, which were more adjacent to science, but tell us something about how research was regarded:

- Google X: Google X quietly launched in 2010, described by the New York Times, which first uncovered its existence, as a secret lab within Google tackling “shoot-for-the-stars ideas”. Google X popularized the term “moonshots” and now describes itself as a “moonshot factory”.

- MIT Media Lab: MIT Media Lab describes itself today as an “interdisciplinary research lab.” While not focused on science, it was frequently referenced as a symbol of tech x academia research culture. It flourished in the 2010s under the direction of its charismatic leader, Joi Ito, until he resigned abruptly in 2019 in light of controversial financial ties.

Early philanthropic approach (2015-2017)

By the mid-2010s, enough personal wealth had been generated from tech exits in the first half of the decade that some funders began to experiment with traditional philanthropic approaches.

In 2015, Y Combinator announced the formation of a nonprofit research institute, YC Research, initially funded by a personal $10M donation from its president, Sam Altman. While not directly addressing science (their first research programs focused on universal basic income, cities, and HCI), YC Research can be understood as a bellwether for changing cultural attitudes. As Sam Altman explained in his announcement post, sometimes “startups aren’t ideal for some kinds of innovation,” a wholly new sentiment at the time:

Our mission at YC is to enable as much innovation as we can. Mostly this means funding startups. But startups aren’t ideal for some kinds of innovation—for example, work that requires a very long time horizon, seeks to answer very open-ended questions, or develops technology that shouldn’t be owned by any one company.

However, he emphasized that YC Research still aimed to do things differently from typical research institutes (emphasis mine):

We think research institutions can be better than they are today….Compensation and power for the researchers will not be driven by publishing lots of low-impact papers or speaking at lots of conferences—that whole system seems broken. Instead, we will focus on the quality of the output.

In the same year, Mark Zuckerberg and Priscilla Chan announced that they were donating 99% of their Facebook shares towards philanthropic initiatives, housed under the Chan Zuckerberg Initiative. Like Y Combinator, Chan and Zuckerberg chose to do things a little differently, structuring CZI not as a 501c3 nonprofit (like most philanthropic foundations), but as an LLC, which they believed would give them “flexibility to execute our mission more effectively.”

CZI’s first investment was a $3B pledge to “cure, prevent, and manage all human diseases in our lifetime”, to be allocated over ten years. $600M of that pledge was earmarked for the creation of Biohub, a research center located at the University of California, San Francisco (UCSF), in partnership with Stanford University and the University of California, Berkeley.

In their joint announcement, Zuckerberg explained that the lack of progress in life sciences was tied to how science was currently funded and organized (emphasis mine):

Building tools requires new ways of funding and organizing science….Our current funding environment doesn’t really incentivize that much tool development….Solving large problems requires bringing scientists and engineers together to work in new ways: to share data, to coordinate and collaborate.

The following year, in 2016, Sean Parker launched the Parker Institute for Cancer Immunotherapy. Parker’s announcement, again, echoed similar concerns with how science was done (emphasis mine):

The problem of cancer is not simply a question of resources, it’s a question of how we allocate those resources….The system is broken somehow….The agencies responsible for funding most scientific research generally don’t encourage scientists to pursue their boldest ideas, so we don’t get ambitious science.

In contrast to the first half of the 2010s, this period saw an emerging interest in funding basic research, and a new, tacit acknowledgment that startups won’t get us all the way there – even while donors highlighted the importance of innovating on research culture itself, with a more tech-flavored focus on output, collaboration, and tool development.

A few other initiatives that launched around the same time, which also reflect these trends, include:

- Open Philanthropy: A research and grantmaking organization that’s more generally focused on improving philanthropy, but whose initial focus areas included funding biological research. Open Philanthropy became an independent organization in 2017, but grew out of a collaboration between Good Ventures (Dustin Moskovitz and Cari Tuna) and GiveWell over several preceding years.

- OpenAI: A nonprofit organization, initially described as a “non-profit research company,” launched in 2015 with a $1B pledge from Elon Musk, Sam Altman, and others. (OpenAI has since transitioned to a for-profit structure.) While not focused on science, OpenAI became one of the largest research efforts to launch in tech in recent years. Their initial announcement emphasized the importance of open publishing, open patents, and collaboration.

One thing that appears to be missing from this period – despite a professed interest in improving collaboration among researchers – is coordination between donors. Rather, one has the sense that each effort was centered around the donor themselves, instead of donors working together to address a clearly defined problem through multiple approaches.

This is not intended as a critique, but to highlight how early major donors were still learning how to strategically tackle science through non-startup approaches – as well as the very difficult challenge of defining their philanthropic work outside of traditional expectations – compared to today’s cohort.

Field building and new institutions (2018-2021)

In recent years, funders and founders became much more tightly coordinated with each other, which helped catalyze a burst of new science initiatives.

A 2017 NBER working paper, “Are Ideas Getting Harder to Find?”, which suggested that “research effort is rising substantially while research productivity is declining sharply,” prompted renewed conversation about scientific innovation. In 2018, Patrick Collison and Michael Nielsen published an op-ed in The Atlantic, containing original research that made a similar argument: while there were “more scientists, more funding for science, and more scientific papers published than ever before…are we getting a proportional increase in our scientific understanding?”

The following year, Patrick Collison and Tyler Cowen published a related Atlantic piece, “We Need a New Science of Progress”, suggesting that “the world would benefit from an organized effort to understand” how progress is made, including identifying talent, incentivizing innovation, and the benefits of collaboration.

While their op-ed focused on progress more broadly, science was a prominently featured example. Collison and Cowen stated that, “[W]hile science generates much of our prosperity, scientists and researchers themselves do not sufficiently obsess over how it should be organized,” and “critical evaluation of how science is practiced and funded is in short supply, for perhaps unsurprising reasons.”

The Atlantic op-ed (combined with plenty of follow-up efforts) led to the formation and growth of a “progress studies” community, which provided a much-needed intellectual home and community for people who, among other things, were interested in scientific progress.

While today’s practitioners in science are not formally affiliated with progress studies (and most would probably say they are not), and while progress studies is concerned with many other questions beyond science, my sense is that the formation of such a community helped: [2]

- Serve as a Schelling point for likeminded people, attracting more talent to the space, and

- Legitimize the work of practitioners.

In 2021, a group of people got together for a face-to-face “Bottlenecks in Science and Technology Workshop”, under the premise that bottlenecks “are present across science and technology, and solving them could unlock great progress for an entire field.” Attendees were a mix of both founders and funders, many of whom were already working on science-related initiatives, including Fast Grants, Convergent Research, and Rejuvenome.

The workshop was well-received by attendees. It helped more people meet and get to know each other, strengthened a shared approach and interest in the space, and even inspired new collaborations.

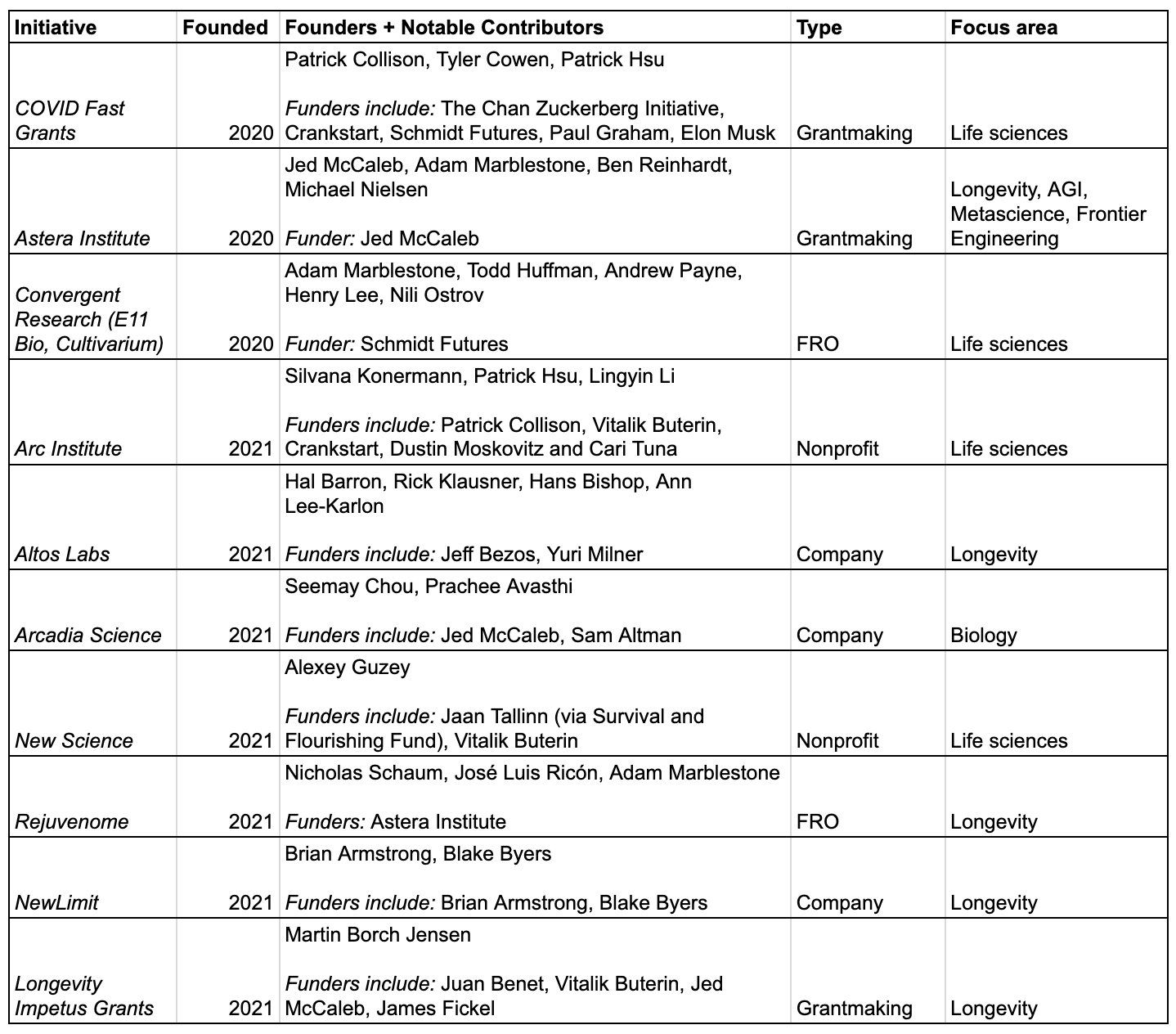

Here are some of the science initiatives that launched in recent years. Especially notable is the diversity of experiments within a shared problem space, combined with increased coordination between funders and founders (note the amount of overlap across initiatives). Compared to the more monolithic, siloed approaches of the 2010s, these are all signs of a healthy, flourishing space.

Most of these initiatives are focused on life sciences. I asked several people why they felt this was the case. A few ideas include:

- Personal connections and interests: Several funders and founders had preexisting connections to, or background in, the life sciences.

- Storytelling and public narrative: Life sciences means tackling issues like curing diseases, life extension, fertility medicine, and genetics. The benefits of pursuing such work can be easily understood by the general public (compared to, say, existential risk or space exploration), especially in the wake of a global pandemic.

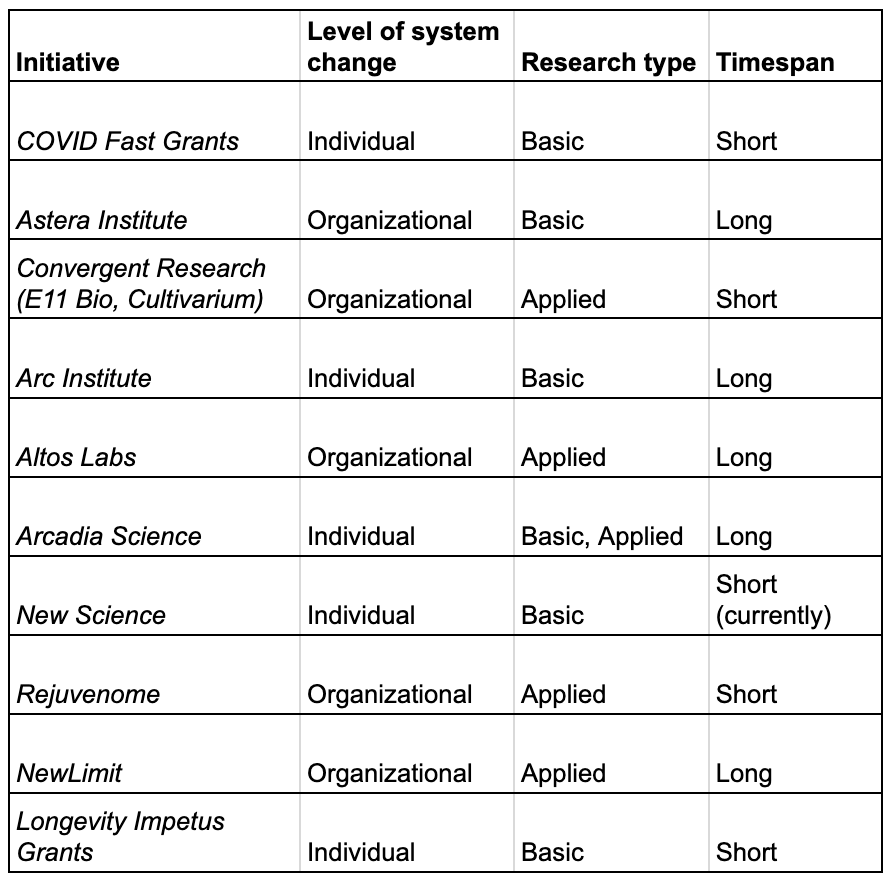

As previously observed, this cohort is marked by a diverse set of approaches: a mix of for-profit and non-profit pursuits, as well as both grantmaking and operating organizations. We can also note a diversity of approaches in terms of level of system change (organizational vs. individual), type of research (basic vs. applied), and program timespan (short vs. long).

Why are there so many new initiatives today?

While there has been a consistent group of eager practitioners in science for quite some time, it is only the recent influx of funding that’s made it possible to put some of these longstanding ideas into practice. (For example, Adam Marblestone and Sam Rodriques had been thinking about Focused Research Organizations for many years before the idea was successfully funded.)

Some funders prefer to downplay their role as “capital providers,” but I think it’s important to highlight the importance of good funder practices. Specifically, I’d emphasize how, far from “throwing money at a problem”, science funders in tech today have taken a strategic, yet classical philanthropic approach to establishing a new space. Two major efforts were especially useful in laying the groundwork:

- Better coordination: Increased coordination and co-funding among funders, which helps them learn from each other and make bigger bets, as well as making practitioners feel secure pursuing long-term work;

- Field building: Signaling that these are interesting and worthy problems, which attracts others to the space and legitimizes practitioners’ work.

What led to the renewed interest in funding science in the first place? A few likely factors, some of which are external conditions, and others the result of deliberate effort:

A global COVID pandemic

By forcing people to grapple with large, unyielding systems, the pandemic helped us realize that the world was more malleable than it had previously seemed. People were frustrated by bureaucracy, unable to opt out of that bureaucracy, and realized they could act now – rather than in some distant future – to make things better. [3]

Fast Grants was launched in direct response to COVID, and its success appears to have informed the vision of Arc Institute. Longevity Impetus grants were also inspired by the Fast Grants model, but with a different thematic focus.

Arcadia Science’s founders directly point to the COVID-19 pandemic as having “ignited feelings of urgency, collaboration, and enthusiasm for scientific progress extending beyond our typical circles. The resulting vaccine developments demonstrated just how powerful science and collaboration among scientists can be.”

One person I spoke to thought that COVID may have also had the effect of breaking up Silicon Valley groupthink, due to everybody geographically scattering to other places, which exposed people to new ways of thinking and made them more amenable to non-startup approaches.

Successful field building and better coordination between participants

Publishing op-eds, hosting workshops, and forming a progress studies community made it easier for likeminded people to find and coordinate with each other. As Luke Muehlhauser notes in his Open Phil report on early field growth, while these methods may seem “obvious,” they also “often work.”

In my conversations, longtime practitioners commented that people have been interested in this problem space for many decades, but only in recent years were they surprised to find that there were (quote) “more people like us than I realized.”

And even among practitioners who’ve known and worked with each other for years, field building had the effect of making their work feel higher-status than before – more like being a startup founder – which will continue to attract others to the space.

Several people commented on this effect in our conversations. One person said that these types of projects (i.e. starting an ambitious project that’s not a startup) were considered “non-fundable” until recently, and that this changed because a few people have now “made it cool.” Another felt that while the typical person in tech might not yet understand what they were doing, their work is no longer perceived as “low-status.”

The crypto wealth boom

2017 and 2021 were two major inflection points for crypto wealth generation. We’re starting to see the downstream effects of the first boom, and will likely see the effects of the second boom in the next few years.

Crypto has had both direct and indirect effects on the science funding space. Firstly, in practical terms, it created a new wave of potential funders. [4] Today’s active crypto funders in science are mostly beneficiaries of the first boom in 2017 – just as, e.g. Mark Zuckerberg, Dustin Moskovitz, and Sean Parker were beneficiaries of Facebook’s 2012 IPO and became active philanthropic funders several years downstream.

Secondly, crypto wealth became a forcing function for “trad tech” to take bigger risks with culture-building. While it’s hard to prove that this is true, we can think of it as a shifting of the Overton window, where the appearance of a group with way more extreme views than the median can make previously radical-seeming positions seem reasonable to pursue. In tech’s case, the fact that crypto is a sector that nonironically wants to rebuild society from the ground up makes it seem much less strange to, say, launch a new 501c3 research institute.

There are several other macro conditions that likely contributed to a shift in tech’s appetite for funding new science efforts: a bull market that made capital cheap; an accelerating disillusionment with legacy institutions among the general population; a wave of liquidity events in the late 2010s that generated new wealth; and a radical shift in tech’s relationship to mainstream culture that started in the mid-2010s. These topics are beyond the scope of what I’d like to cover here, but worth noting as other contributing causes.

Measuring success

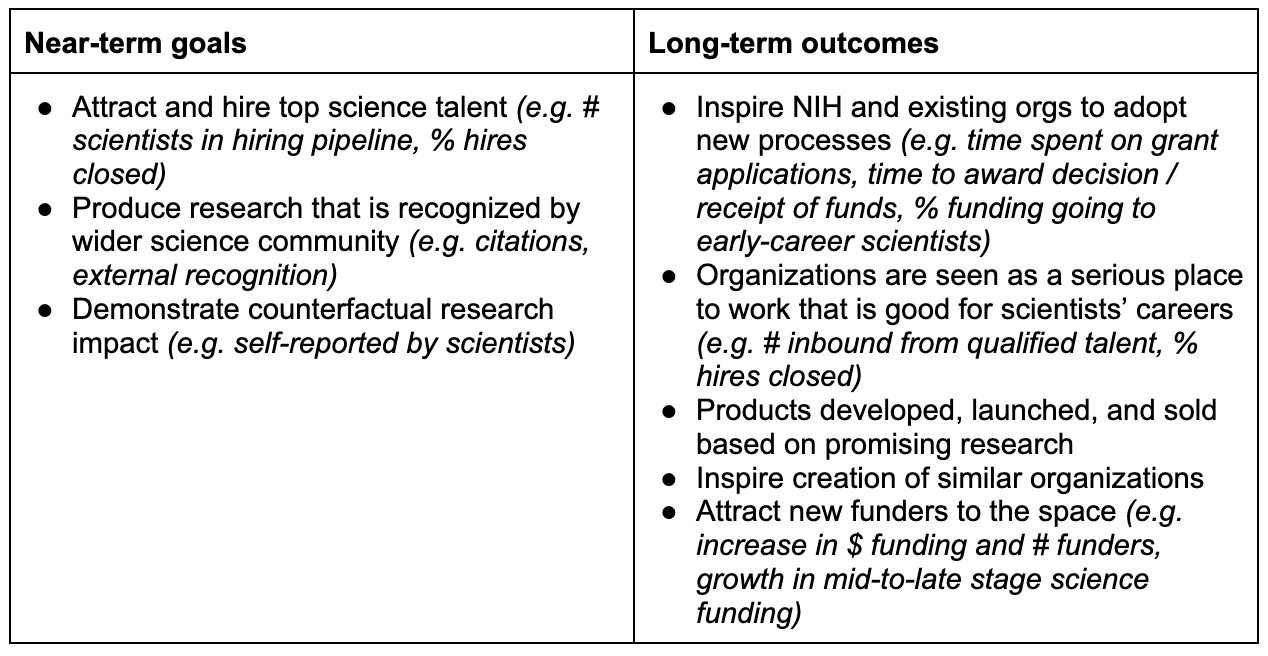

Finally, I wanted to understand how participants in today’s cohort think about measuring impact. A decade from now, how will we know if these efforts were successful or not?

Nearly everyone I spoke to referenced some version of “the $100B problem” (a term attributed to David Lang), referring to the fact that private capital is relatively small compared to federal R&D funding, which in the United States amounts to $100B+/year. The latest wave of initiatives, based on what we can surmise, represents something on the order of several billion dollars total. While significant, it’s a fraction of what the government can do.

As a result of these relative financial constraints, the participants I spoke to were instead thinking about how they might inspire improvements in federal funding (particularly at the NIH, for life sciences) by demonstrating what’s possible, rather than trying to compete dollar-for-dollar. This approach aligns with the role of philanthropic capital in civil society more generally, where the goal is not to compete with or replace government, but to seed new ideas through private experiments that don’t detract from public tax dollars. [5] Public libraries, public schools, and universities, for example, were all shaped by early philanthropic work in the United States.

Practitioners who started companies instead of nonprofits are similarly motivated by a desire to extend the life of their capital. If a company is successful, it can inspire the creation of other science companies, because there is plenty of startup funding available. By contrast, successful nonprofits don’t tend to inspire the creation of more nonprofits (even if they influence each other’s practices and interests), because philanthropic capital is limited, which fosters a more competitive, zero-sum landscape.

Here are a few near- and long-term goals that I heard in my conversations, as well as suggestions for how they might be measured.

Epilogue: DeSci and new crypto primitives

There’s one more chapter to this story, which I’ve put into its own “epilogue” section because it’s both new and markedly different from the approach above, but also serves as an important foil to everything we’ve covered so far.

If we zoom out and consider how science is funded and supported, there are multiple approaches we could take. Public goods aren’t solely funded by governments; they can also be influenced by markets (i.e. starting companies) and philanthropic capital. The examples we’ve looked at so far, regardless of how new or different they may seem, fall into one of these existing buckets.

There is another, more radical approach, which I’ll (awkwardly) call the crypto-native approach. Proponents of such an approach argue that the aforementioned efforts, while a positive development, ultimately replicate the same problems of our existing legacy systems. Creating new institutions without rewriting their underlying incentives, they’d say, fails to solve anything in the long run: it simply resets the timer on institutional decline.

Even among the “trad tech” cohort, there is a wide range of answers to the question of “Are we trying to create new public institutions, or merely make existing ones better?” Several initiatives are thinking long-term about how to avoid institutional decline, such as capping their funding or even organizational size. Either way, most people I spoke to seem to resonate with the “$100B problem” approach: that is, efficiently deploying limited amounts of capital to effect change at the much larger federal level.

By contrast, in a crypto-native approach, proponents hope to create entirely new ways of funding public goods. While they share the same long-term vision of improving scientific progress, as well as attracting top talent and bringing research to market, their strategies are different. Their theory of change might look something like:

Ensure that scientific progress can flourish by inventing new ways to reward scientists, improve collaboration, and assess and amplify the quality of their work, so that they can fully pursue their curiosity and produce research that finds its way into applications that benefit humanity.

In my conversations, I heard near-verbatim statements made by those espousing different approaches, to the effect of “The current systems in academia, research, and government are designed to produce a certain set of outcomes. Unless we invent new games, nothing is going to change.” Among trad tech, however, it seems that the new games are creating new institutions (but the underlying organizing principles are assumed to be static), whereas among crypto, it’s designing new incentive systems entirely (where organizing principles are assumed to be malleable).

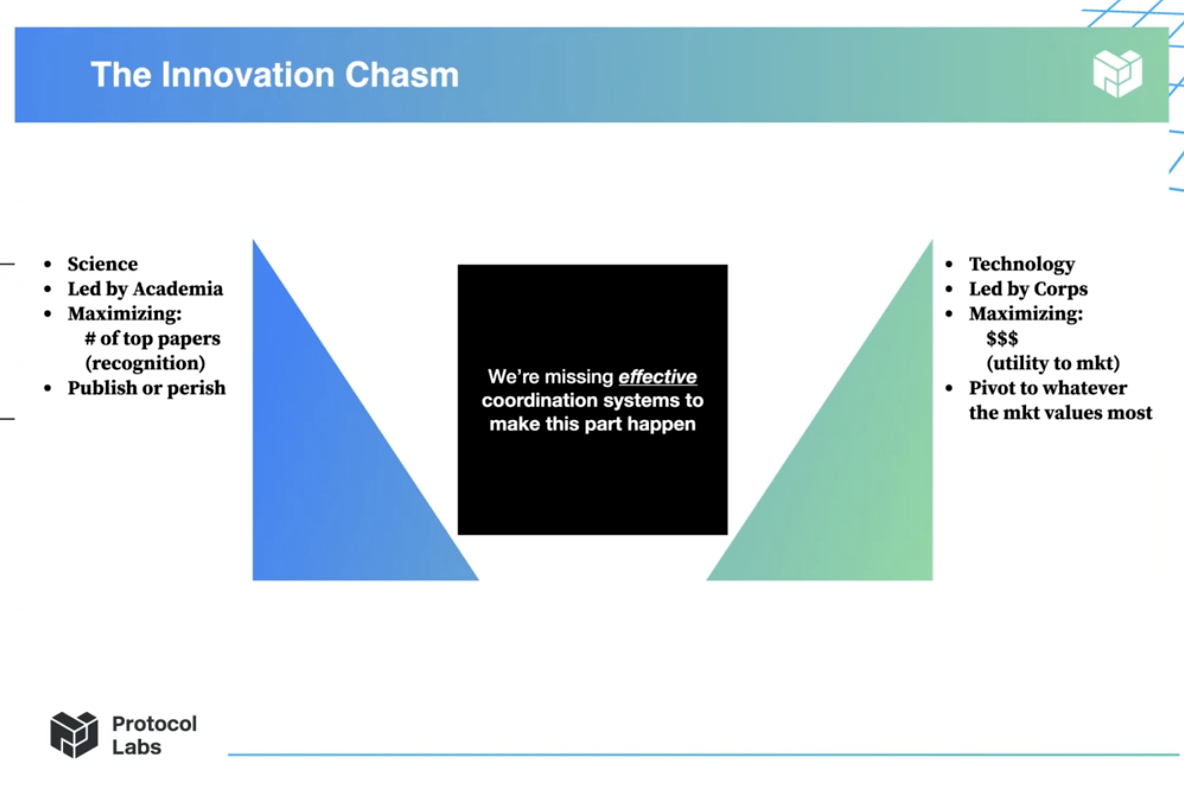

At Funding the Commons, a virtual conference hosted in 2021 by Protocol Labs on funding public goods, founder Juan Benet gave a talk about “closing the innovation chasm.” He noted that in the last decade, the startup ecosystem has yielded significant R&D innovation by productizing new technologies. From his perspective, Y Combinator has contributed significantly more to R&D innovation than either Alphabet or Ethereum.

Source: Juan Benet, via Funding the Commons.

But while basic research efforts focus on fixing problems in the “blue triangle” area above, they don’t address the missing “black square”: translating research into real-world innovation. Just as the tech ecosystem has created billions of dollars in venture capital funding for startups, then, the crypto ecosystem can do the same for funding public goods.

This, to me, gets to the heart of the difference between tech-native and crypto-native approaches to solving public goods problems. In a best-case scenario, the tech approach is to generate wealth via startups, then use the resulting surplus wealth for philanthropic ends (whether through for-profit or nonprofit initiatives). The crypto approach, on the other hand, is to create a native funding system for public goods, so that participants can generate wealth through the development of public goods themselves. [6]

Vitalik Buterin also gave a talk at Funding the Commons that echoed these sentiments. He explains that blockchain communities are built more on public goods than private goods, such as open source code, protocol research, documentation, and community building. He therefore emphasizes that “Public goods funding needs to be long-term and systematic,” meaning that funding needs to come “not just from individuals, but from applications and/or protocols.” New crypto primitives can help address those needs, such as DAOs or token awards.

A few differences between crypto- and trad tech-native approaches:

- Belief in limited vs. uncapped upside. Whereas those in trad tech recognize the limitations of the $100B problem, crypto takes a much wider view of what’s possible. One person I spoke to thought that crypto networks could rival federal funding levels in the next decade. A new set of crypto primitives would also make it possible to significantly increase financial rewards for scientists. Whether this is achievable or not, I find this belief in uncapped upside to be inspiring.

- Centralization vs. decentralization of talent. As previously mentioned, trad tech seems to focus their efforts on helping the really good scientists who are slowly being destroyed by an ailing bureaucracy. Crypto, on the other hand, takes a more diffuse approach to talent, attracting and coordinating a larger network of contributors. (As one person told me: “Science progress is a coordination problem.”) With a crypto approach, the goal is to equip the world with tools that allow anyone to experiment (which eventually filters for the best talent), rather than proactively identifying and recruiting the best talent into an organization. We can think of this as an open source vs. Coasean approach to talent, which is a thematic difference between crypto and trad tech more broadly.

While trad tech and crypto offer two distinct approaches to tackling science, there is still crossover activity between funders. Funders don’t fall into one category or another based on where they work, but rather on differences in theory of change. It is possible to – and some funders, such as Vitalik, do – support both trad tech and crypto efforts, in what we might call a “diversified portfolio” approach to improving science.

Zooming in a bit further on crypto, there is an emerging movement to deploy new primitives towards science, which is sometimes referred to (in its web3-flavored form) as DeSci, or decentralized science. While not everyone identifies with this term, I’ll use it as shorthand in this section to refer to the crypto-centric approach to improving science because, well, it rolls off the tongue better.

A surprising number of DeSci practitioners have backgrounds in science. These aren’t just crypto evangelists who’ve decided to apply their skills to a new industry: there are also scientists who are leaving their positions in academia or industry to go all-in on DeSci.

Jessica Sacher, a microbiologist-turned-cofounder of Phage Directory, describes herself as having previously had a strong “analog existence”:

I came from the molecular microbiology lab bench, where I wrote out my experimental methods and data in paper notebooks (on good days; the rest I wrote on paper towels and rubber gloves). I barely used even Excel during 7 years at the bench.

Nonetheless, she was attracted to DeSci because it offered an optimistic vision she wasn’t getting in academia (emphasis mine):

[T]he more time I spend with people in the tech/startup space, the more I’m realizing that science’s problems come from manmade systems of incentives, not from fundamental truths of the universe….This may be obvious to people already [in tech], but it was not obvious to me as a biologist.

Joseph Cook, another DeSci proponent, is an environmental scientist at Aarhus University in Denmark, with a computational focus. While he, like other scientists, believes that “our current infrastructure for [scientific research] is no longer fit for purpose,” he believes “a decentralised model could be used to rewrite the rules of professional science.”

Interestingly, many DeSci participants also appear to have a life science background, or are focused on life science initiatives, like their trad tech counterparts. [7]

While the DeSci space is still emerging, here are a few examples of experiments that launched in the past year.

VitaDAO

VitaDAO is a community fund, managed by a DAO, that is “funding, and advancing longevity research in an open and democratic manner.” They have over 4,500 members on Discord and fund grants ranging from $25K to $500K. As of January 2022, they’ve funded two projects and $1.5M worth of research.

VitaDAO’s revenue model is not dissimilar to Thiel’s Breakout Labs, but with a crypto spin: VitaDAO members own the IP from projects they fund (although they state this is negotiable), which theoretically increases the financial value of the $VITA token. VitaDAO partners with Molecule, a company that describes itself as “an OpenSea of biotech IP”, which developed an IP-NFT framework to manage its IP. (Molecule is launching a similar effort for psychedelics research, PsyDAO.)

OpScientia

OpScientia is a platform that is developing a new set of research workflows that are built upon the principles of openness, accessibility, and decentralization. A few examples include: decentralized file storage for research data, verifiable reputation, and “game theoretic peer review.”

It’s again useful to compare the language of OpScientia to trad tech’s theory of change when it comes to talent; OpScientia describes itself as “a community of open science activists, researchers, organisers and enthusiasts” that’s “building a scientific ecosystem that unlocks data silos, coordinates collaboration and democratises funding.”

LabDAO

LabDAO aims to create a community-run network of wet and dry laboratory services, where members can run experiments, exchange reagents, and share data. Its founder, Niklas Rindtorff, is a physician scientist at the German Cancer Research Center in Heidelberg, Germany. LabDAO is still pre-launch, but is in active development and has nearly 700 members in its Discord.

Planck

Planck wants to improve how scientific knowledge is created and rewarded by putting digital manuscripts on a blockchain, which they call “alt-IP.” Their founder, Matt Stephenson, is a behavioral economist who sold an NFT containing an independent data analysis for $24,000.

Summary

Compared to previous years, there is now an expanded set of approaches to improving how science is conducted, thanks to:

- Changing macro conditions such as COVID, a flurry of liquidity events in tech, and the crypto boom which raised the bar on what’s possible;

- Deliberate field-building efforts (writing, community building, and convenings) to legitimize science work and attract talent to the space;

- Better coordination between funders (including co-funding opportunities) and practitioners

There are still new science startups being built today, such as NewLimit, Arcadia Science, and Altos Labs. But now there are also examples of research institutes, such as Arc Institute and New Science, and there are even emerging examples of crypto-native experiments, like VitaDAO and LabDAO. It’s not that one approach replaced the other, but that there are now many more people trying different things, which is a sign of a growing, flourishing space.

Tech is still heavily dominated by startups, and will probably continue to be for a very long time. But as tech matures as an industry, and there are more extreme wealth outcomes, there is now (as one might expect) an increased interest in solving ambitious problems with philanthropic capital.

Crypto takes this one step further with the development of new primitives for public goods. They are concerned that traditional philanthropic strategies will repeat the mistakes of legacy institutions, and therefore look to develop new ways of rewarding scientists and helping them share in uncapped upside, which, if successful, could do for science (and other public goods) what startups did for venture capital.

There are fundamental differences in the crypto- vs. tech-native theories of change. Tech focuses on hiring top talent, but borrows similar reward structures from both science and startups today. Crypto takes a more diffuse, networked approach to attracting talent and is more willing to reimagine basic structures like patents, IP, and even research labs themselves. Both types of practitioners believe in working exogenously to improve legacy institutions.

On the trad tech side, it will be interesting to see whether the first cohort of “anchor” funders manage to attract additional funders to the space. If their efforts are successful, we should expect to see:

- Scientists publishing high-quality work that’s recognized by the wider science community;

- New initiatives that continue to attract top talent, and are seen as a great place to build a career in science;

- Changes at NIH and elsewhere in the federal sector, thanks to new initiatives that demonstrate what’s possible

On the crypto side, we should watch to see whether new initiatives:

- Are able to generate and distribute funding for science work;

- Produce research that is recognized by the wider science community;

- Generate uncapped rewards (financially or otherwise) for participating scientists

I’m particularly interested in watching the tension between tech- and crypto-native approaches unfold. While they are at different stages of maturity, at a high level, these are two major experiments playing out at the same time.

The tech story maps quite predictably to philanthropic efforts in previous decades, which is to say that it has a higher likelihood of success: it’s a pattern that people understand better. The crypto story is radically different, requiring us to reimagine what it means to fund and develop public goods from an entirely new set of assumptions. It is much likelier to fail, or to succeed only in limited contexts. But if it does succeed, the upside is unimaginably larger.

Further reading

The following is an assorted collection of artifacts that I came across during my research, in case you’d like to explore more deeply. There’s no rhyme or reason to what’s included here other than providing a glimpse, at the primary level, at how people think and talk at the intersection of science and tech/crypto.

- 2021 Bottlenecks in Science and Technology Workshop (José Luis Ricón)

- Announcing NewLimit: a company built to extend human healthspan (Brian Armstrong, Blake Byers)

- Building a knowledge graph for biological experiments (Niklas Rindtorff)

- CZI Biohub Announcement (Facebook Live video recording) (Mark Zuckerberg, Priscilla Chan)

- Distributing Innovation with The VitaDAO Core Team (Idea Machines podcast) (Ben Reinhardt and the VitaDAO core team)

- DeSci: The case for Decentralised Science (Joseph Cook)

- Focused Research Organizations to Accelerate Science, Technology, and Medicine (proposal) (Sam Rodriques, Adam Marblestone)

- Funding the Commons (November 2021 virtual conference) (Protocol Labs)

- How Life Sciences Actually Work: Findings of a Year-Long Investigation (Alexey Guzey)

- Ideas on how to improve scientific research (Brian Armstrong)

- Introducing Arc Institute (Patrick Hsu, Silvana Konermann, Patrick Collison)

- Sean Parker Launches the Parker Institute for Cancer Immunotherapy (video) (Parker Institute for Cancer Immunotherapy)

- Silicon Valley’s New Obsession (Derek Thompson for The Atlantic)

- The Hollywood Analogy (David Lang)

- Update on Investigating Neglected Goals in Biological Research (Nick Beckstead, Open Philanthropy)

- What we learned doing Fast Grants (Patrick Collison, Tyler Cowen, Patrick Hsu)

- Why does DARPA work? (Ben Reinhardt)

- YC Research announcement post (Sam Altman)

Notes

Thanks to everyone I spoke with as background research for this post. Special thanks to José Luis Ricón and Ben Reinhardt for their insights. Acknowledgments are not endorsements; any misrepresentations of ideas or conversations in this post are my errors alone.

If you have feedback, additional context, or corrections to share, please drop me a line; I’d love to incorporate your perspective.

1. The book is oddly cheerful about this new state of things; Cowen believed that while we were currently in a period of stagnation and needed to adjust our expectations accordingly, it would probably end in a few decades.

2. A similar example might be the intellectual foundation that rationalists and LessWrong provided for the artificial intelligence community. While these two communities don’t overlap perfectly, the former helped influence and legitimize the latter.

3. Compare Marc Andreessen’s “It’s Time to Build” thesis in 2020, which captures this sentiment quite well, to his 2011 “Software is Eating the World” thesis.

4. Also underappreciated, and rarely discussed, on the practitioner side: crypto provided many people with a modest savings cushion that enabled them to pursue crazy ideas outside of startups.

5. For what it’s worth, successful startups do this, too!

6. Even if the crypto-native approach succeeds in the long run as a means of funding public goods, however, it doesn’t obviate the need for philanthropy. Generating wealth via the development of public goods still creates surplus wealth that needs to be discharged. But that’s for another post.

7. The explanations for “why life sciences” from the trad tech section do less of a good job of explaining why crypto practitioners also have similar backgrounds. One thought is that perhaps there are cultural differences between, say, biologists and astrophysicists, similar to the cultural differences between JavaScript developers and cryptographers, that might make them more receptive to unorthodox career paths. (EDIT: A few readers have suggested these explanations: (1) life sciences require more funding than e.g. CS or math, and yet are trapped by one major federal funding source, so they feel the pain more acutely; (2) they’re constrained by norms of publishing in paywalled journals instead of arXiv; (3) life sciences have clear potential for real-world commercial opportunities, making it attractive to private funders.)